Description

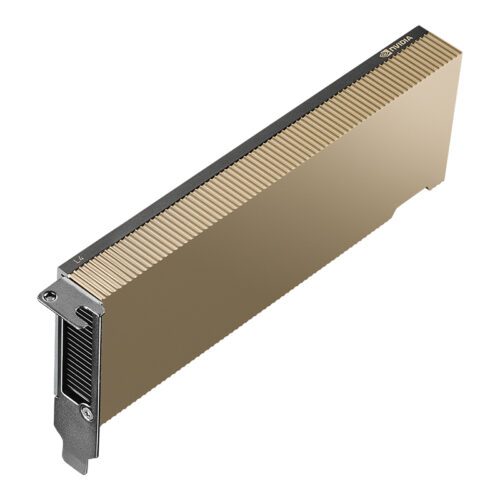

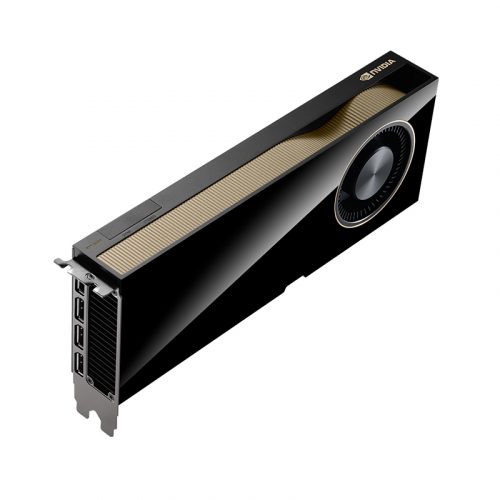

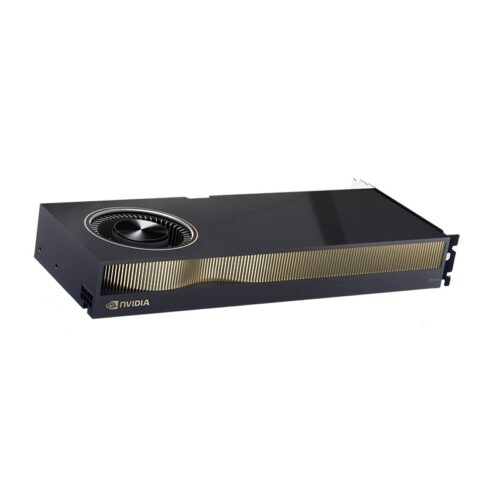

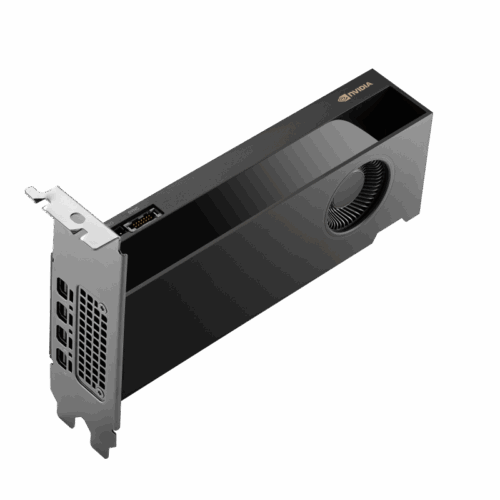

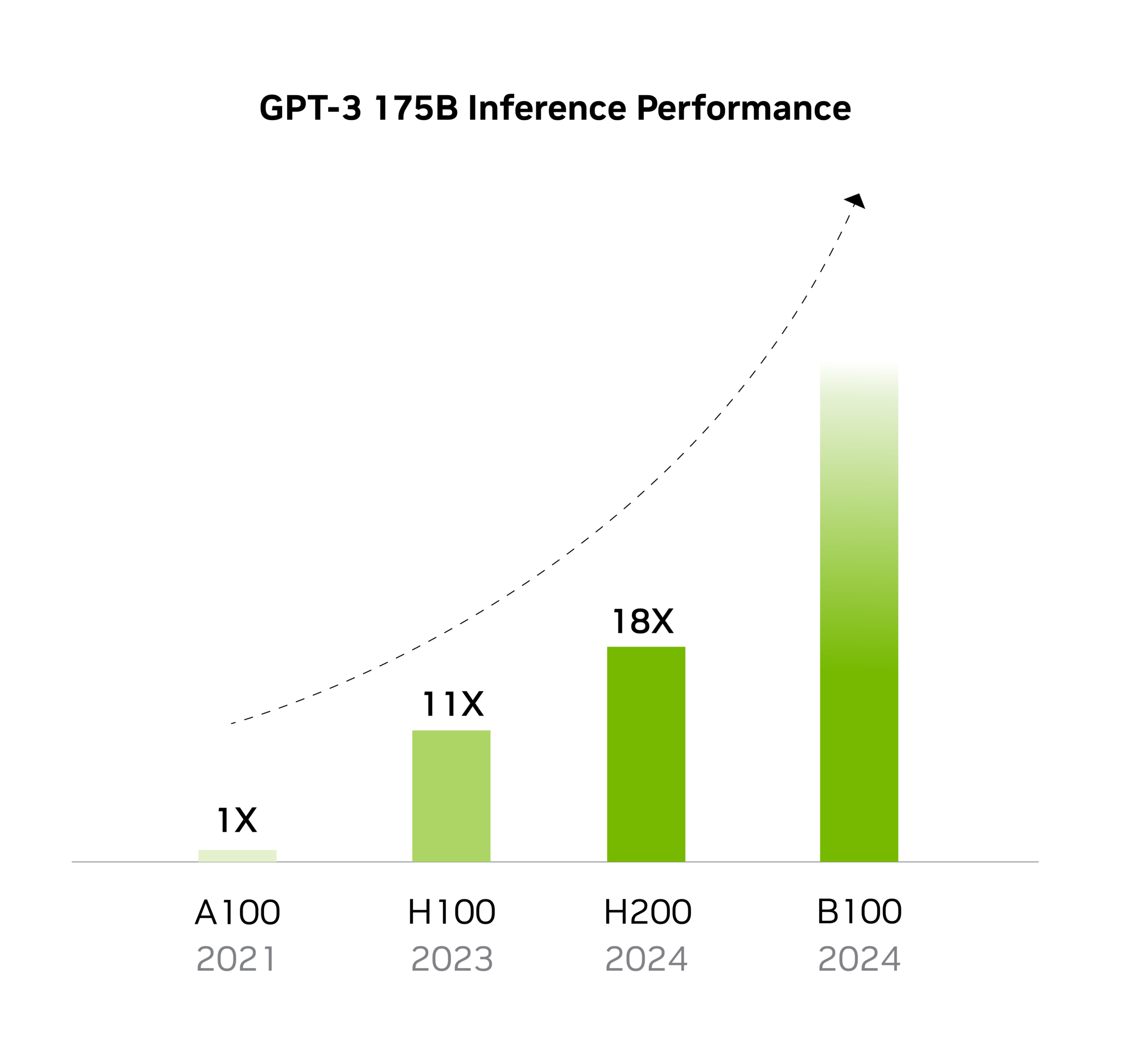

Built on the NVIDIA Hopper architecture, the NVIDIA H200 is the first GPU to offer 141 gigabytes (GB) of HBM3e memory at 4.8 terabytes per second (TB/s). That is nearly double the memory capacity of the NVIDIA H100 Tensor Core GPU and 1.4 times its memory bandwidth. The H200’s larger, faster memory accelerates generative AI and large language models (LLMs) and advances scientific computing for HPC workloads, with better energy efficiency and lower total cost of ownership.
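The "nearly double" and "1.4 times" comparisons can be checked with simple arithmetic. The sketch below assumes the H100 baseline is the H100 SXM variant (80 GB of HBM3 at 3.35 TB/s); those H100 figures are not stated on this page. Under that assumption the ratios come out to roughly 1.76x for capacity and 1.43x for bandwidth.

```python
# H200 figures come from the description above.
# H100 figures are an ASSUMPTION: the H100 SXM variant (80 GB HBM3, 3.35 TB/s).
H200_MEMORY_GB = 141
H200_BANDWIDTH_TBPS = 4.8
H100_MEMORY_GB = 80
H100_BANDWIDTH_TBPS = 3.35


def ratio(new: float, old: float) -> float:
    """Return how many times larger `new` is than `old`."""
    return new / old


if __name__ == "__main__":
    # Capacity: 141 / 80 is a little under 2x ("nearly double").
    print(f"Capacity ratio:  {ratio(H200_MEMORY_GB, H100_MEMORY_GB):.2f}x")
    # Bandwidth: 4.8 / 3.35 is about 1.4x.
    print(f"Bandwidth ratio: {ratio(H200_BANDWIDTH_TBPS, H100_BANDWIDTH_TBPS):.2f}x")
```

Other H100 variants (for example, PCIe or NVL cards) have different memory specifications, so the ratios shift depending on which H100 is used as the baseline.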

Additional information

| Specification | Value |
|---|---|
| Memory Type | HBM3e |
| GPU Architecture | Hopper |
| DisplayPort Output | No |
| Card Types | SERVER |