Description

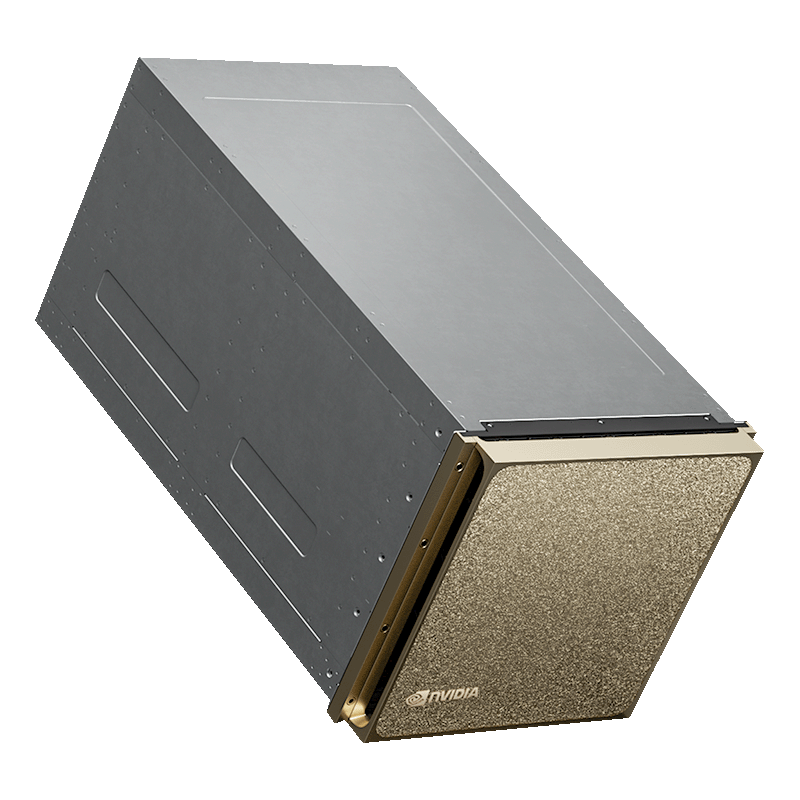

NVIDIA DGX B200 is an infrastructure platform for AI applications, combining high performance with energy-efficient operation.

1. Superior Performance

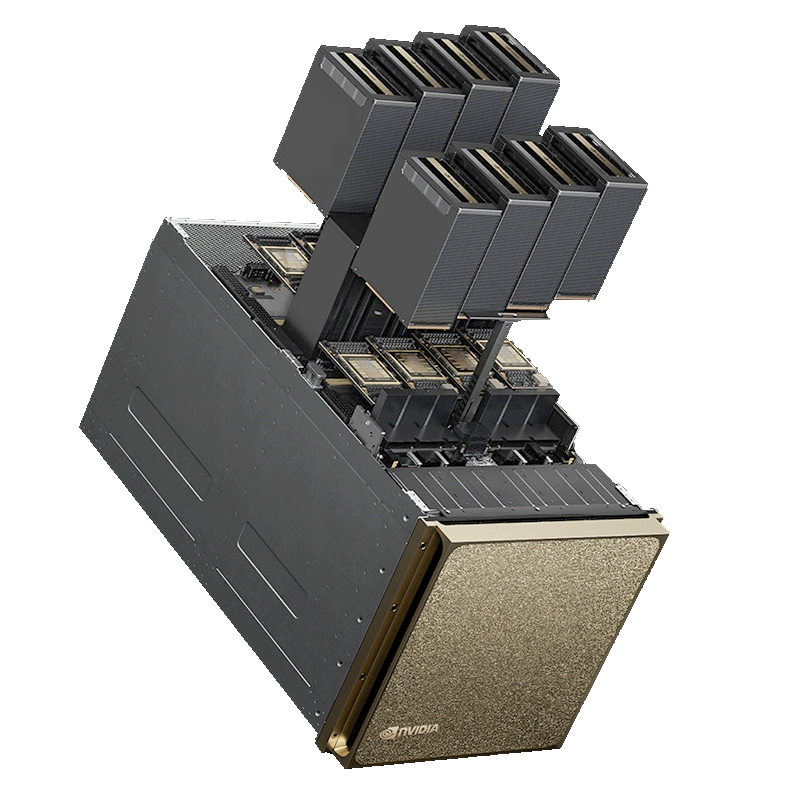

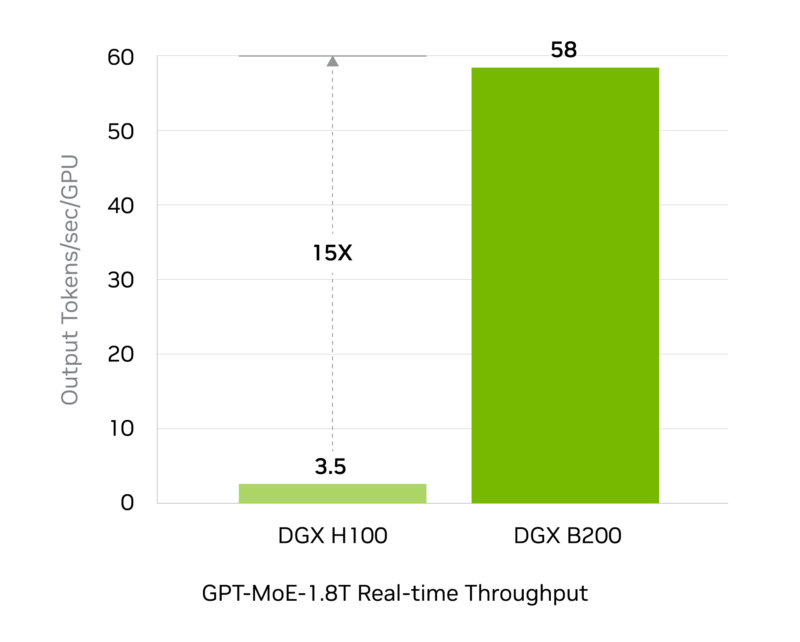

- Advanced GPU Architecture: Equipped with NVIDIA Blackwell Tensor Core GPUs, it delivers 72 petaFLOPS of training performance (FP8) and 144 petaFLOPS of inference performance (FP4).

- NVLink Technology: It enables high-bandwidth data transfer between GPUs, making it well suited to large language models (LLMs) and complex multi-GPU workloads.

2. Efficiency and Sustainability

- Energy Efficiency: Optimized energy consumption delivers up to 50% energy savings. With a maximum power consumption of 14.3 kW, it is also suited to sustainability-focused AI projects.

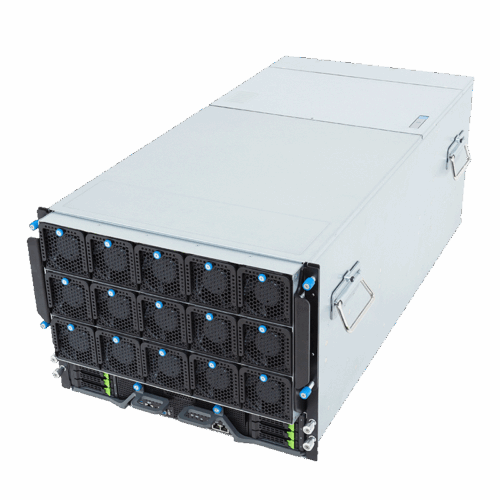

- Thermal Management: An improved thermal design keeps operation stable even under sustained high-performance workloads.

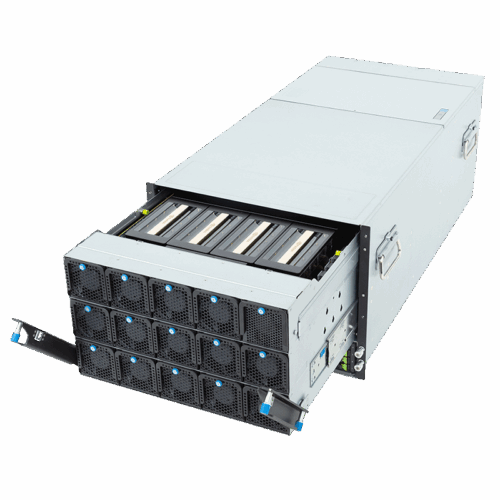
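As a rough illustration of what the 14.3 kW maximum power figure means in practice, the sketch below computes an upper bound on energy use over a period. The function names, the `utilization` parameter, and the electricity price are hypothetical and only serve the example; real consumption depends on the workload and is normally below the maximum.

```python
# Upper-bound energy estimate based on the maximum power figure quoted above.
MAX_POWER_KW = 14.3  # maximum power consumption from the product description


def energy_kwh(hours: float, utilization: float = 1.0) -> float:
    """Energy in kWh over `hours`, scaled by an assumed average utilization (0..1)."""
    return MAX_POWER_KW * hours * utilization


def energy_cost(hours: float, price_per_kwh: float, utilization: float = 1.0) -> float:
    """Cost of that energy at a hypothetical price per kWh."""
    return energy_kwh(hours, utilization) * price_per_kwh


if __name__ == "__main__":
    day = energy_kwh(24)  # one day at full power
    print(f"Upper bound per day: {day:.1f} kWh")
    # The price of 0.20 per kWh is a placeholder, not a quoted tariff.
    print(f"Cost per day at 0.20/kWh: {energy_cost(24, 0.20):.2f}")
```

Because the calculation assumes the system draws its maximum power for the whole period, the result is a ceiling for capacity planning rather than a measurement.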

3. Flexible Infrastructure

- Storage and Memory: Up to 4 TB of system memory and 1,440 GB of GPU memory provide ample resources for complex data processing tasks.

- Modular Networking: With InfiniBand and Ethernet support up to 400 Gb/s, it meets high-speed data transfer requirements.
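To put the 1,440 GB GPU memory figure in context, the following sketch estimates whether a model's weights would fit at different numeric precisions. The 400-billion-parameter model and the 1.2 overhead factor are assumptions chosen for illustration; actual memory needs also depend on activations, KV cache, optimizer state, and the serving or training framework.

```python
# Rough sizing sketch: do a model's weights fit in the quoted GPU memory?
GPU_MEMORY_GB = 1_440  # total GPU memory from the product description

# Bytes per parameter for common weight precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "fp8": 1.0, "fp4": 0.5}


def weights_gb(num_params: float, precision: str) -> float:
    """Weight footprint in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits_in_gpu_memory(num_params: float, precision: str, overhead: float = 1.2) -> bool:
    """True if weights times an assumed overhead factor fit in GPU memory."""
    return weights_gb(num_params, precision) * overhead <= GPU_MEMORY_GB


if __name__ == "__main__":
    params = 400e9  # hypothetical 400B-parameter model
    for precision in BYTES_PER_PARAM:
        size = weights_gb(params, precision)
        ok = fits_in_gpu_memory(params, precision)
        print(f"{precision}: {size:.0f} GB of weights, fits: {ok}")
```

Under these assumptions the hypothetical model exceeds GPU memory at FP32 but fits at FP16 and lower precisions, which is one reason reduced-precision formats matter for large language models.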

4. Comprehensive Software Ecosystem

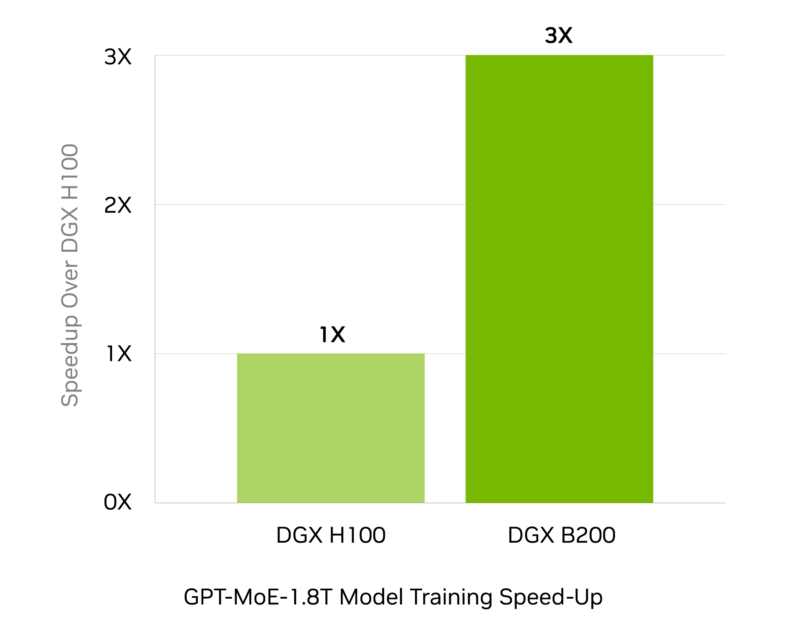

- NVIDIA AI Enterprise and Base Command: Provide software tools optimized for AI workflows, supporting efficient operation at every stage from training to inference.

5. Versatile Applications

- LLM and NLP: Designed for challenging projects such as large language models and natural language processing.

- AI Transformation: Accelerates AI-driven transformations and simplifies processes for organizations.

DGX B200 appeals to a wide range of users, from small businesses to large corporations, with its scalability and flexibility.

Additional information

| Attribute | Value |
|---|---|
| Graphics Card | B200 |
| GPU-GPU | SXM |
| SXM Platform | DGX |