Description

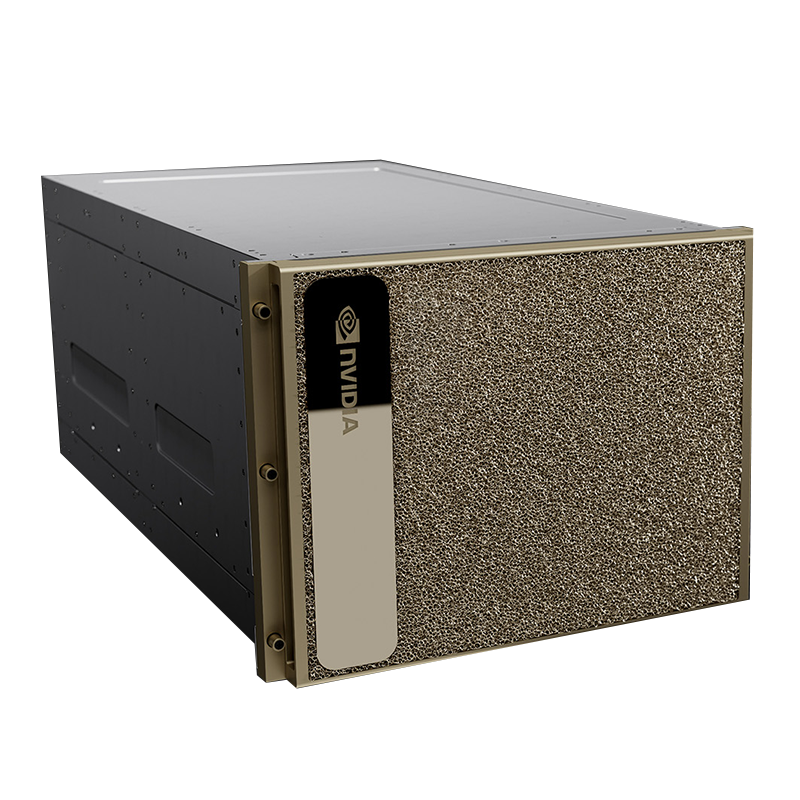

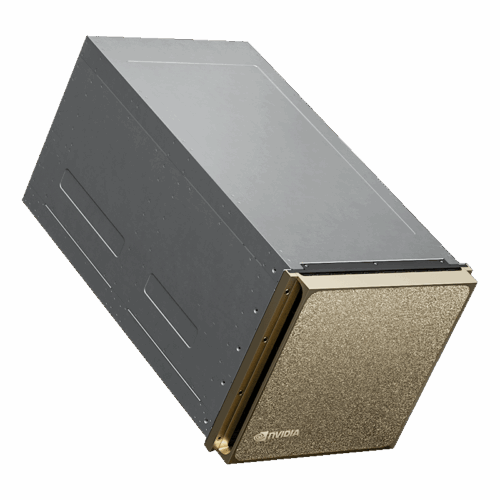

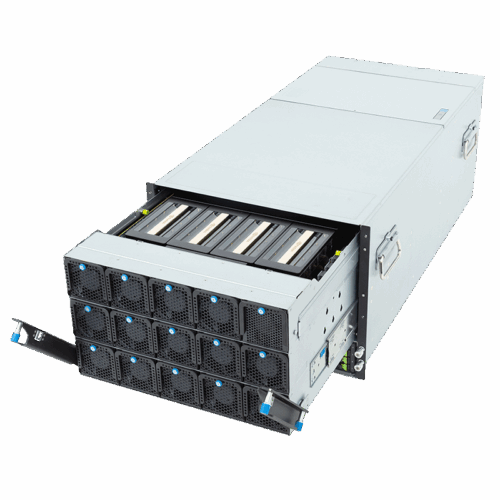

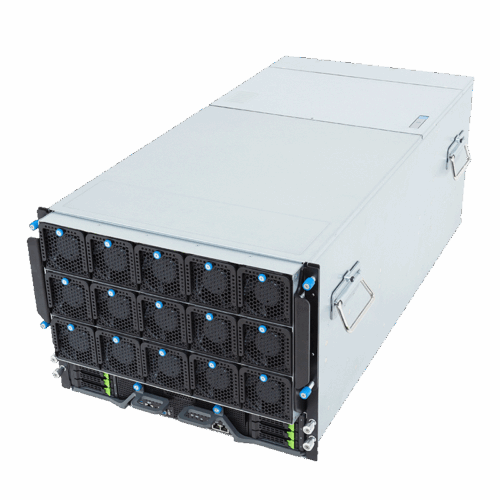

NVIDIA DGX H200 is an AI infrastructure system designed for demanding workloads such as artificial intelligence (AI), high-performance computing (HPC), and large language models (LLMs). Built on NVIDIA’s H200 Tensor Core GPUs, it delivers high performance and energy efficiency for AI projects.

Areas of Application

LLM and Generative AI: Optimized for generative AI workloads such as large language models, image generation, and text generation.

HPC Solutions: Ideal for intensive data processing and analysis projects.

Research and Development: Used by organizations that require high-performance computing power for scientific research.

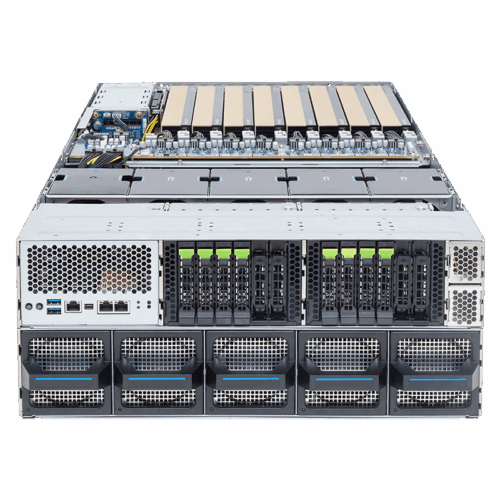

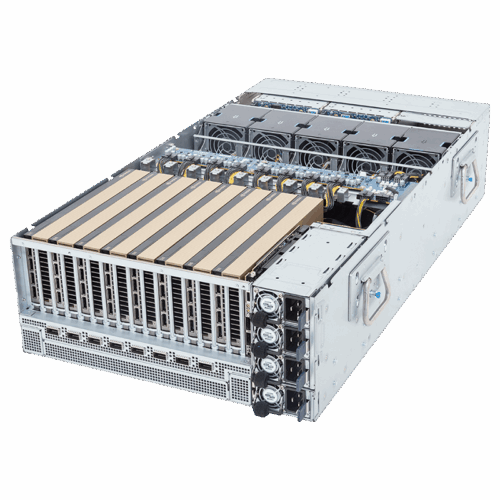

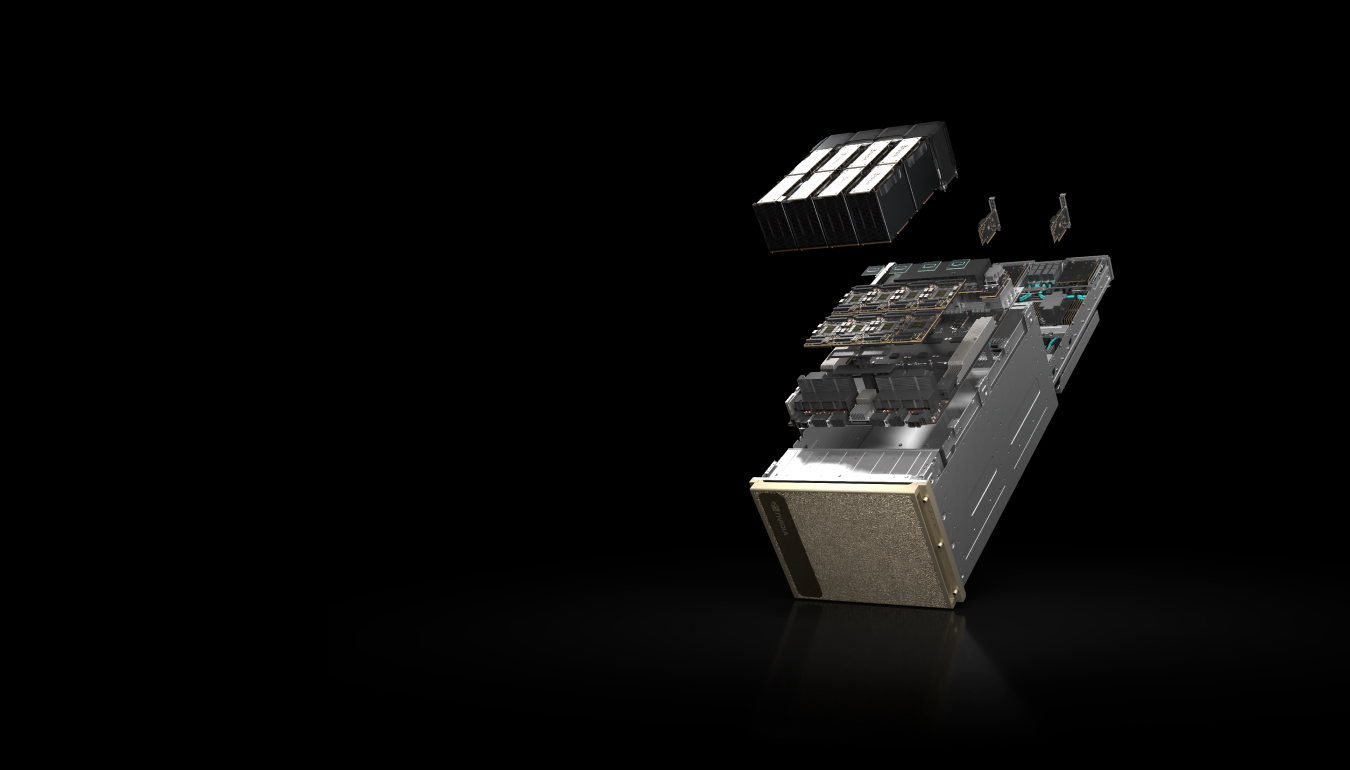

- 8x NVIDIA H200 GPUs with 1,128 GB total GPU memory
  18x NVIDIA NVLink® connections per GPU, 900 GB/s bidirectional GPU-to-GPU bandwidth.
- 4x NVIDIA NVSwitches
  7.2 TB/s bidirectional GPU-to-GPU bandwidth, 1.5 times faster than the previous generation.
- 10x NVIDIA ConnectX®-7 400 Gb/s Network Interface
  1 TB/s peak bidirectional network bandwidth.
- Dual Intel Xeon Platinum 8480C processors, a total of 112 cores, and 2 TB of system memory
  Powerful CPUs for the most demanding AI tasks.
- 30 TB NVMe SSD
  High-speed storage for maximum performance.
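The headline figures in the list above can be cross-checked with simple arithmetic. The following is a minimal sketch, not vendor-supplied code: the per-GPU memory (141 GB) and per-CPU core count (56) are assumptions chosen to match the stated totals, and all function and constant names are hypothetical.

```python
# Sanity-check the DGX H200 totals quoted in the spec list.
# Values marked "assumed" are not stated on this page.

GPUS = 8
HBM_PER_GPU_GB = 141           # assumed per-GPU memory
NVLINK_PER_GPU_GB_S = 900      # GB/s bidirectional per GPU (from the list)
NICS = 10
NIC_GBIT_S = 400               # Gb/s per ConnectX-7 interface (from the list)
CPUS = 2
CORES_PER_CPU = 56             # assumed cores per Xeon Platinum 8480C


def total_gpu_memory_gb() -> int:
    """Total GPU memory across all GPUs, in GB."""
    return GPUS * HBM_PER_GPU_GB


def aggregate_nvlink_tb_s() -> float:
    """Aggregate bidirectional GPU-to-GPU bandwidth, in TB/s."""
    return GPUS * NVLINK_PER_GPU_GB_S / 1000


def peak_network_tb_s() -> float:
    """Peak bidirectional network bandwidth, in TB/s."""
    one_way_gb_s = NICS * NIC_GBIT_S / 8   # convert gigabits to gigabytes
    return 2 * one_way_gb_s / 1000         # count both directions


def total_cpu_cores() -> int:
    """Total CPU cores across both processors."""
    return CPUS * CORES_PER_CPU


if __name__ == "__main__":
    print(total_gpu_memory_gb(), "GB GPU memory")
    print(aggregate_nvlink_tb_s(), "TB/s NVLink aggregate")
    print(peak_network_tb_s(), "TB/s network peak")
    print(total_cpu_cores(), "CPU cores")
```

Note the unit difference: the network interfaces are rated in gigabits per second (Gb/s), while the bandwidth totals are quoted in bytes (GB/s, TB/s), so the network calculation divides by 8.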

Why DGX H200?

DGX H200 is a turnkey AI infrastructure solution that combines energy efficiency, high performance, and optimized software. Users get a high-performance AI platform without handling complex integration work themselves, which saves time, accelerates workloads, and reduces costs in AI projects.

Additional Information

| Graphics Card | H200 |
|---|---|
| GPU-GPU | SXM |
| SXM Platform | DGX |