Blueprint-Driven Architectural Integrity

SCALABLE DESIGN INSPIRED BY NVIDIA BASEPOD & SUPERPOD REFERENCE ARCHITECTURES

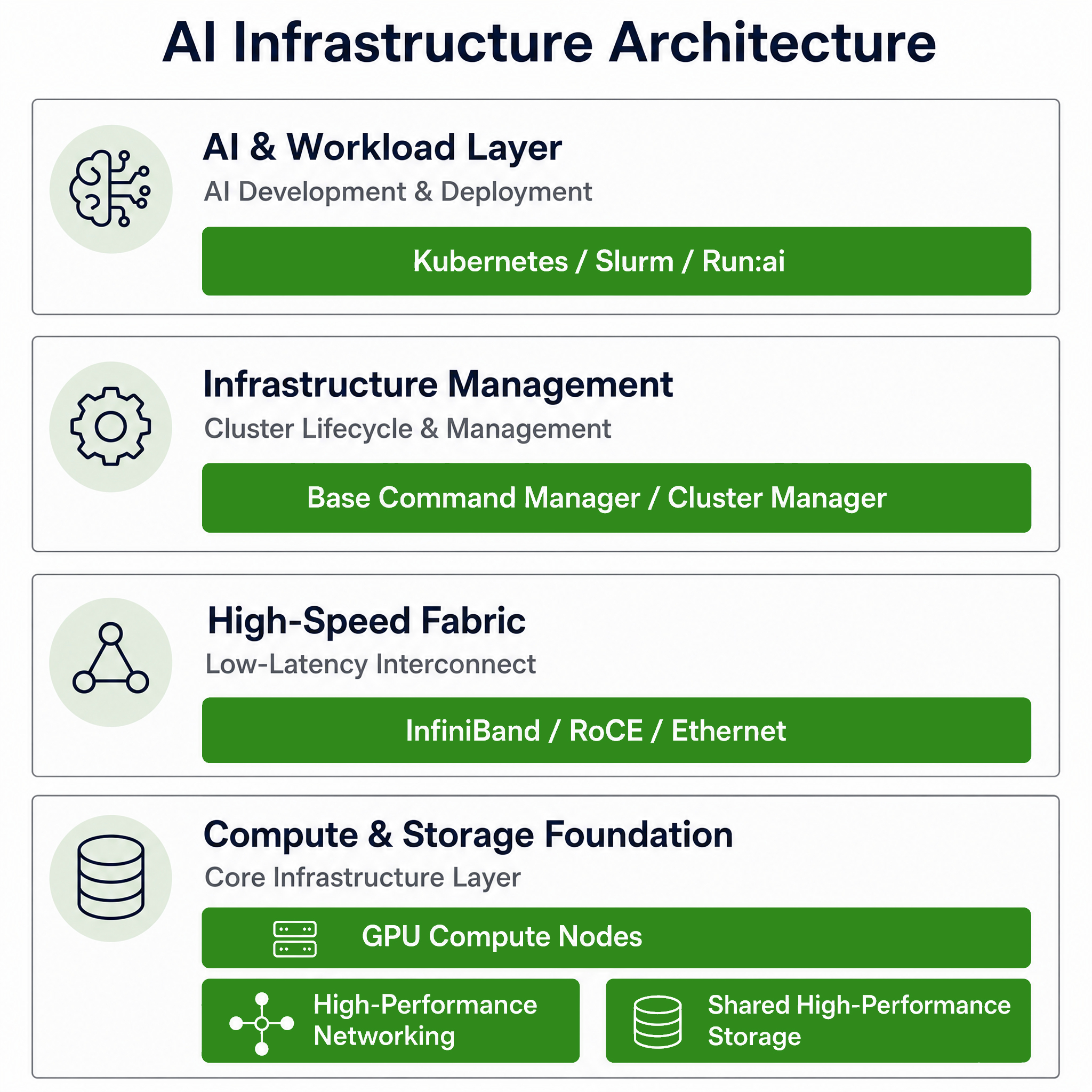

We do not assemble clusters component by component. We design them using a blueprint-driven architecture model inspired by proven BasePOD and SuperPOD principles.

Every deployment follows a layered, validated framework in which compute, high-speed fabric, storage, and the control plane are engineered as a unified system rather than as isolated parts.

This approach addresses architectural bottlenecks at design time, before they appear, and keeps scaling predictable from day one.

Deployment Packages

Structured engagement models aligned with cluster scale, performance requirements, and operational maturity.

BASE

Single Rack

Best for: Proof of Concept and Small AI Pods

✔ Single rack or small pod deployment

✔ Kubernetes or Slurm installation

✔ Basic monitoring and health validation

✔ Standard Ethernet networking

✔ Initial workload validation

SCALE

Multi Rack

Best for: Growing AI Infrastructure and Multi-Rack Expansion

✔ Multi-rack architecture design

✔ InfiniBand or RoCE fabric tuning

✔ Shared or parallel storage integration

✔ Run:ai installation and GPU allocation policies

✔ Performance validation and optimization

ENTERPRISE

Mission Critical

Best for: Production-Grade AI Factories and Mission-Critical Environments

✔ High-availability control plane

✔ Dual fabric and redundancy validation

✔ Air-gapped deployment workflows

✔ Disaster recovery planning and failover validation

✔ Operational runbook and knowledge transfer
